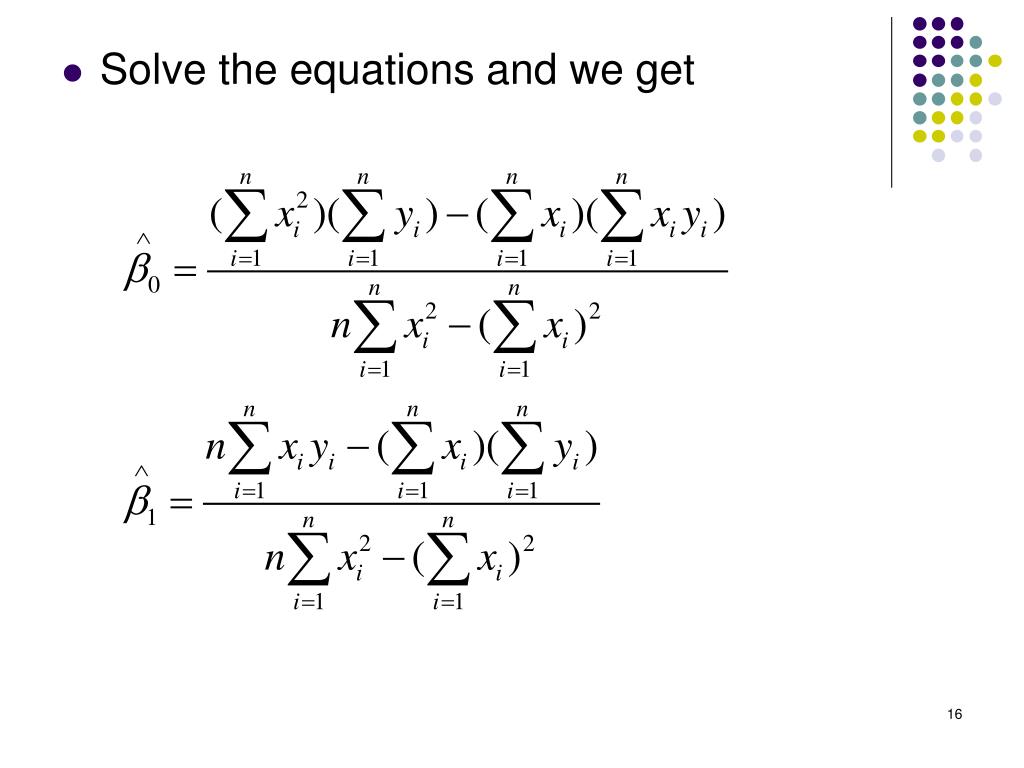

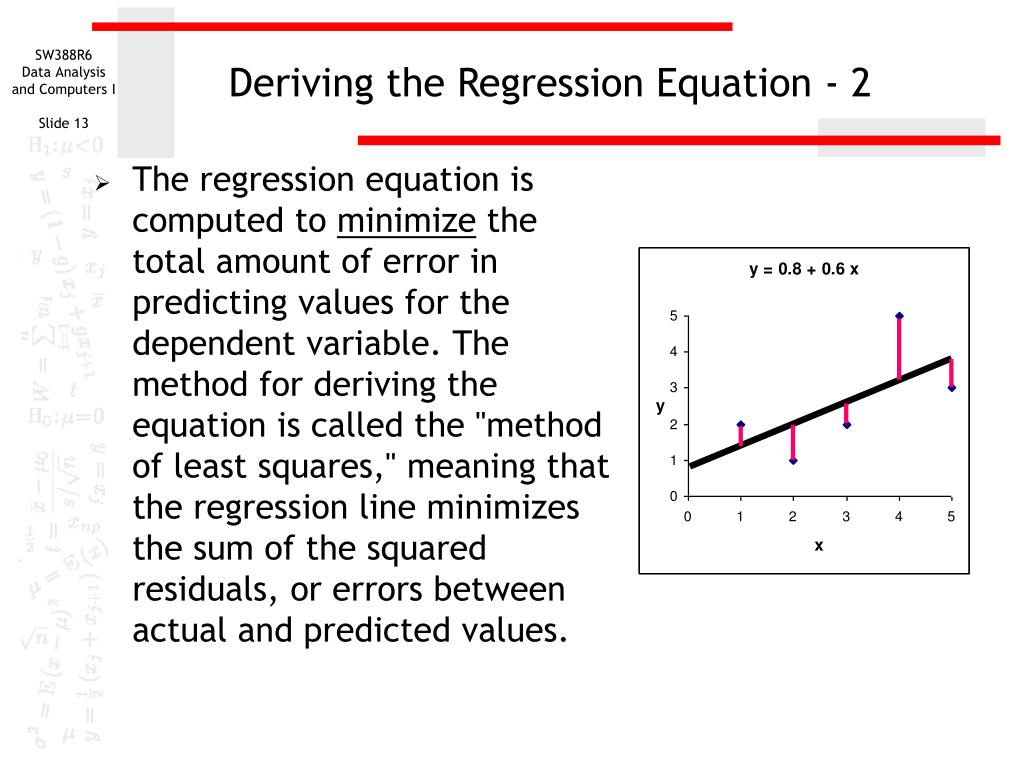

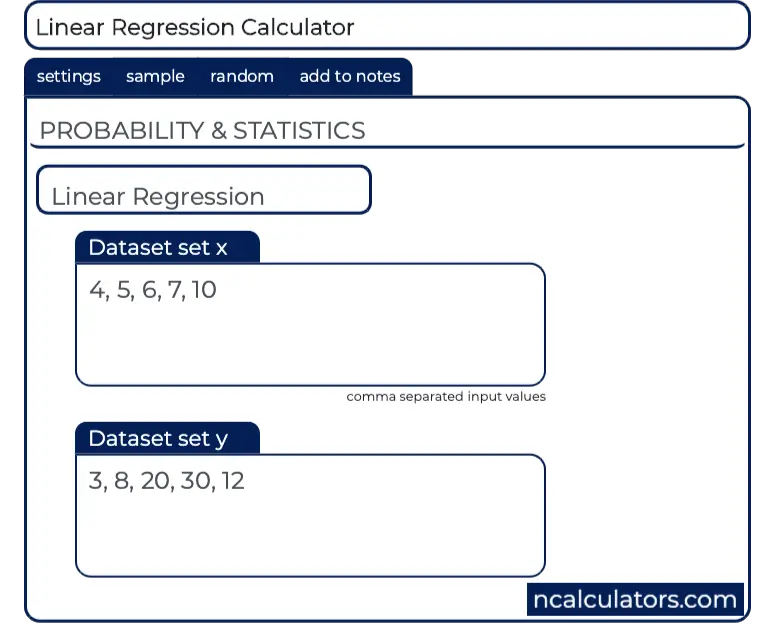

Figure 5.4.2: Illustration showing three data points and two possible straight-lines that might explain the data. How do we decide how well these straight-lines fit the data, and how do we determine the best straight-line?

Linear regression finds the straight line, called the least squares regression line or LSRL, that best represents observations in a bivariate data set. To understand the logic of a linear regression, consider the example shown in Figure 5.4.2, which shows three data points and two possible straight-lines that might reasonably explain the data.

Linear regression can be categorized into two main types: simple linear regression and multiple linear regression. Simple linear regression involves analyzing the relationship between two variables: one independent variable (X) and one dependent variable (Y). Suppose Y is a dependent variable and X is an independent variable. The population regression line is:

$$Y = \beta_0 + \beta_1 X$$

where $\beta_0$ is a constant, $\beta_1$ is the regression coefficient, and X is the independent variable.

The least squares regression line was computed in Example 10.4.2 and is $\hat{y} = 0.34375x - 0.125$. SSE was found at the end of that example using the definition $\sum (y - \hat{y})^2$. The computations were tabulated in Table 10.4.2. SSE is the sum of the numbers in the last column, which is 0.75.

A linear regression of this kind rests on three assumptions:

1. that the difference between our experimental data and the calculated regression line is the result of indeterminate errors that affect y.
2. that indeterminate errors that affect y are normally distributed.
3. that the indeterminate errors in y are independent of the value of x.

Because we assume that the indeterminate errors are the same for all standards, each standard contributes equally in our estimate of the slope and the y-intercept. For this reason the result is considered an unweighted linear regression.

The second assumption generally is true because of the central limit theorem, which we considered in Chapter 4. The validity of the two remaining assumptions is less obvious and you should evaluate them before you accept the results of a linear regression. In particular, the first assumption always is suspect because there certainly is some indeterminate error in the measurement of x. When we prepare a calibration curve, however, it is not unusual to find that the uncertainty in the signal, $S_{std}$, is significantly larger than the uncertainty in the analyte's concentration, $C_{std}$. In such circumstances the first assumption is usually reasonable.

Another approach to developing a linear regression model is to fit a polynomial equation to the data, such as $y = a + bx + cx^2$. You can use linear regression to calculate the parameters a, b, and c, although the equations are different from those for the linear regression of a straight-line.
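To make the least squares line and SSE quoted above more concrete, here is a minimal Python sketch of an unweighted straight-line fit. The five data points are an assumption: the text does not list the data behind Example 10.4.2, so these hypothetical values were chosen only because they reproduce the quoted slope, intercept, and SSE. The function names `least_squares_line` and `sum_squared_errors` are likewise illustrative, not taken from the source.

```python
# Hypothetical data set (NOT given in the text), chosen because it
# reproduces the quoted line y-hat = 0.34375x - 0.125 and SSE = 0.75.
xs = [2, 2, 6, 8, 10]
ys = [0, 1, 2, 3, 3]


def least_squares_line(xs, ys):
    """Return (slope, intercept) of the unweighted least squares line."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Sums of squares and cross-products about the means
    ss_xx = sum((x - x_mean) ** 2 for x in xs)
    ss_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = ss_xy / ss_xx
    intercept = y_mean - slope * x_mean
    return slope, intercept


def sum_squared_errors(xs, ys, slope, intercept):
    """Return SSE, the sum of (y - y_hat)^2 over all data points."""
    return sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))


slope, intercept = least_squares_line(xs, ys)
sse = sum_squared_errors(xs, ys, slope, intercept)
print(f"slope = {slope:.5f}, intercept = {intercept:.3f}, SSE = {sse:.2f}")
```

The same `sum_squared_errors` helper can score any candidate straight line against the data. The least squares line is the one with the smallest SSE, which is one way to answer the question of which of two possible lines fits better.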
